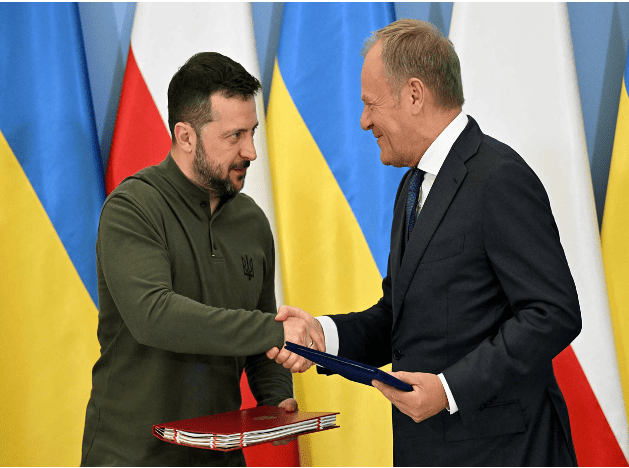

Poland and Ukraine sign bilateral security agreement

On July 8, Ukrainian President Zelensky, who was visiting Poland, and Polish Prime Minister Tusk signed a bilateral security agreement in Warsaw, the capital of Poland.

The agreement clearly states that Poland will provide support to Ukraine in air defense, energy security and reconstruction. After the signing, Tusk said that the agreement contains concrete bilateral commitments, not "empty promises."

Previously, the United States, Britain, France, Germany and other countries, as well as the European Union, had signed similar agreements with Ukraine.

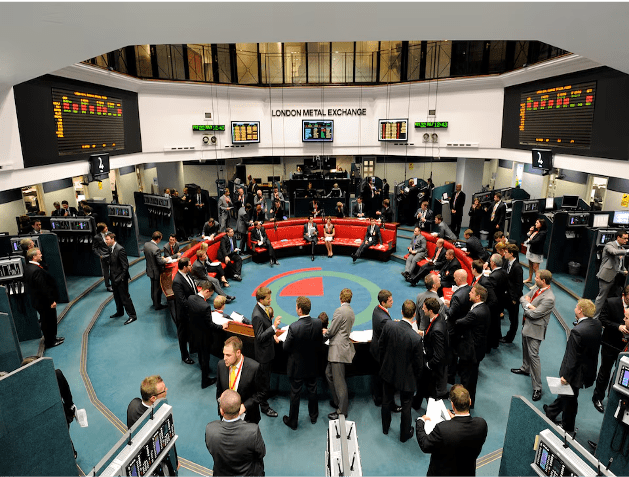

Hedge fund Elliott challenges court verdict it lost against LME on nickel

LONDON, July 9 (Reuters) - U.S.-based hedge fund Elliott Associates on Tuesday urged a London court to overturn a verdict upholding the London Metal Exchange's (LME) cancellation of nickel trades, partly because the exchange had failed to disclose documents.

The LME annulled $12 billion in nickel trades in March 2022 when prices shot to records above $100,000 a metric ton in a few hours of chaotic trading. Elliott and market maker Jane Street Global Trading brought a case demanding a combined $472 million in compensation, alleging at a trial in June last year that the 146-year-old exchange had acted unlawfully.

London's High Court ruled last November that the LME had the right to cancel the trades because of exceptional circumstances, and was not obligated to consult market players before its decision. Jane Street Global did not appeal the ruling.

Lawyers for Elliott told London's Court of Appeal that the LME belatedly released documents in May detailing its "Kill Switch" and "Trade Halt" internal procedures. The LME also newly disclosed an internal report that Elliott said detailed potential conflicts of interest at the exchange.

"It was troubling that one gets disclosure out of the blue in the Court of Appeal for the first time," Elliott lawyer Monica Carss-Frisk told the court. "If we had had them (documents) in the proceedings before the divisional court, we may well have sought permission to cross-examine."

LME lawyers said the new documents were not relevant. "The disclosed documents do not affect the reasoning of the divisional court or the merits of the arguments on appeal," the exchange said in documents prepared for the appeal hearing. "Elliott's appeal is largely a repetition of the arguments which were advanced, and rightly rejected."

The LME said it had both the power and a duty to unwind the trades because a record $20 billion in margin calls could have led to at least seven clearing members defaulting, systemic risk and a potential "death spiral".

Elliott said the ruling diluted protection provided by the Human Rights Act and also wrongly concluded the LME had the power to cancel the trades.

Israeli strike kills 16 at Gaza school, military says it targeted gunmen

CAIRO/GAZA, July 6 (Reuters) - At least 16 people were killed in an Israeli strike on a school sheltering displaced Palestinian families in central Gaza on Saturday, the Palestinian health ministry said, in an attack Israel said had targeted militants.

The health ministry said the attack on the school in Al-Nuseirat killed at least 16 people and wounded more than 50. The Israeli military said it took precautions to minimize risk to civilians before it targeted gunmen who were using the area as a hideout to plan and carry out attacks against soldiers. Hamas denied its fighters were there.

At the scene, Ayman al-Atouneh said he saw children among the dead. "We came here running to see the targeted area, we saw bodies of children, in pieces, this is a playground, there was a trampoline here, there were swing-sets, and vendors," he said.

Mahmoud Basal, spokesman for the Gaza Civil Emergency Service, said in a statement that the death toll could rise because many of the wounded were in critical condition. The attack meant no place in the enclave was safe for families who leave their houses to seek shelter, he said.

Al-Nuseirat, one of the Gaza Strip's eight historic refugee camps, was the site of stepped-up Israeli bombardment on Saturday. An earlier air strike on a house in the camp killed at least 10 people and wounded many others, according to medics.

In its daily update of people killed in the nearly nine-month-old war, the Gaza health ministry said Israeli military strikes across the enclave killed at least 29 Palestinians in the past 24 hours and wounded 100 others.

The Apple Watch is reportedly getting a birthday makeover

The Apple Watch is about to turn 10, so Apple is planning a birthday revamp, including larger displays and thinner builds, Bloomberg reported. Both versions of the new Series 10 watches will have screens similar to the large displays found on the Apple Watch Ultra, the report said. The revamped watches are also expected to contain a new chip that may permit some AI enhancements later on.

Last month, Apple pulled back the curtain on its generative-AI plans with Apple Intelligence. It hopes the artificial-intelligence features will prove alluring enough to persuade consumers to buy new Apple products.

The announcement has been generally well received by Wall Street. Dan Ives of Wedbush Securities wrote in a Monday note that the "iPhone 16 AI-driven upgrade could represent a golden upgrade cycle for Cupertino." "We believe AI technology being introduced into the Apple ecosystem will bring monetization opportunities on both the services as well as iPhone/hardware front and adds $30 to $40 per share," he added.

Apple's stock closed on Friday at just over $226 a share, up 22% this year and valuing the company at $3.47 trillion. That puts it just behind Microsoft, which was worth $3.48 trillion at Friday's close. The tech giants have been vying for the title of the world's most valuable company in recent months, with the chipmaker Nvidia briefly claiming the crown last month.

Apple also announced some software updates for the watch at its Worldwide Developers Conference last month. The latest version of the device's software, watchOS 11, emphasizes fitness and health, introducing tools that allow users to rate workouts and adjust effort ratings. The update will also use machine learning to curate the best photos for users' displays.

Apple has previously used product birthdays to release new versions of devices: the iPhone X's release marked the smartphone's 10th anniversary. However, it's not clear exactly when Apple plans to release the revamped watches, Bloomberg said. The company announced the Apple Watch in September 2014, with CEO Tim Cook calling it "the most personal product we've ever made."

Apple did not immediately respond to a request for comment made outside normal working hours.

How China can transform from passive to active amid US chip curbs

On Monday, executives from three major US chip giants (Intel, Qualcomm and Nvidia) met with US officials, including Antony Blinken, to voice their opposition to the Biden administration's plans to impose further restrictions on chip sales to Chinese companies and on investments in China. The Semiconductor Industry Association also released a similar statement, opposing the exclusion of US semiconductor companies from the Chinese market.

First of all, we mustn't believe that the collective appeals of these companies and industry associations will change the determination of US political elites to stifle China's progress. These elites are deeply fearful of China's rapid development, and they regard the "chip chokehold" as a newly discovered and successful tactic, formed under US leadership with the cooperation of allies.

Chipmaking is currently the most complex technology in human history, with only a few companies at the forefront. They are mainly from the Netherlands, Taiwan island, South Korea and Japan, most of which are in the Western Pacific. These countries and regions are heavily influenced by the US. Although these companies have their own expertise, they still use some American technologies in their products. Therefore, Washington quickly persuaded them to form an alliance to collectively prevent the Chinese mainland from obtaining chips and manufacturing technology. Washington is proud of this and wants to keep tightening the noose on China.

The New York Times bluntly titled an article "'An Act of War': Inside America's Silicon Blockade Against China," in which an American AI expert, Gregory Allen, publicly claimed that this is an act of war against China. He further stated that two dates from 2022 will echo in history: the first is February 24, when the Russia-Ukraine conflict broke out, and the second is October 7, when the US imposed a sweeping set of export controls on selling microchips to China.

China must abandon its illusions and launch a bold and effective counterattack. We already have the capability to produce 28nm chips, and we can use chiplet technology to assemble small semiconductors into a more powerful "brain," working toward 14nm or even 7nm. Additionally, China is the world's largest commercial market for commodity semiconductors. Last year, semiconductor procurement in China amounted to $180 billion, more than one-third of the global total.

China has long faced a choice between independent innovation and external purchases. Because external purchases offered high returns, they easily became the overwhelming choice over independent research and development. Now, however, the US is gradually blocking the option of external purchases, leaving China no strategic choice but to innovate independently, which in turn puts tremendous pressure on American companies.

Scientists generally expect that China has the ability to gradually overcome its chip difficulties, even if it takes some detours along the way, such as the recent arrests of several company leaders who fraudulently obtained subsidies under national semiconductor policies. As we achieve our own breakthroughs and build our own industrial chain, US companies will come under considerable pressure. If domestic firms captured half of the $180 billion China spends on chips each year, that is, $90 billion annually, it would give the industry as a whole a significant boost and help it advance steadily.

The New York Times describes the chip battle as a bet by Washington. "If the controls are successful, they could handicap China for a generation; if they fail, they may backfire spectacularly, hastening the very future the United States is trying desperately to avoid," it argued.

Whether it is a war or a game, when the future is uncertain, what US companies hope for most is to be able to sell scaled-down versions of high-end chips to China, so that the option of external purchases remains open and attractive to China. This would not only protect US companies' interests, giving them sufficient funds to develop more advanced technologies, but also disrupt China's plans for independent innovation. Although this idea is rooted in their own commercial interests, it also has a certain political and national strategic appeal. Hence, it has no shortage of supporters within the US government. US Treasury Secretary Janet Yellen seems to be one of them, as she has repeatedly stated that US restrictions on China will not "fundamentally" hurt China but will be only "narrowly targeted." To maintain its technological hegemony, the US will balance strict suppression of China against leaving China some room, in order to weaken China's determination to counterattack through independent innovation.

China needs to turn this US mindset to its advantage. On the one hand, China should continue to purchase US chips to maintain its economic fundamentals; on the other hand, it should firmly support the development of domestic semiconductor companies, both financially and in the marketplace. Relying on gaps in US chip policy over the long term, however, would be akin to a dependency on opium: the more addicted China became, the weaker it would grow.

China's market is extremely vast, and its innovation capabilities are broadly improving and expanding. Although the chip industry is highly advanced, if there is one country that can win this counterattack, it is China. As long as we resolutely continue on the path of independent innovation, this road will definitely become wider. Various breakthroughs and turning points that are unimaginable today may soon occur.

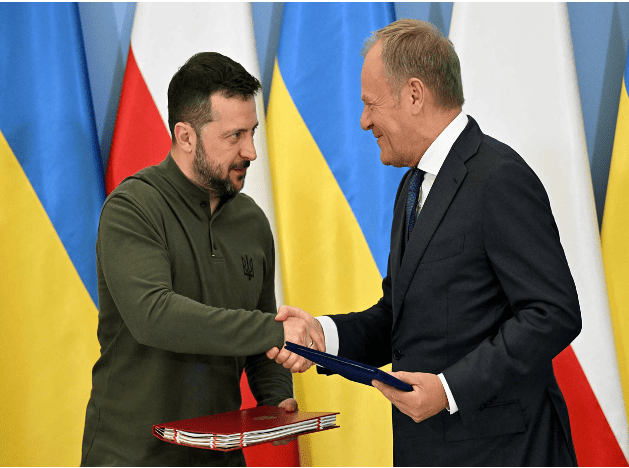
