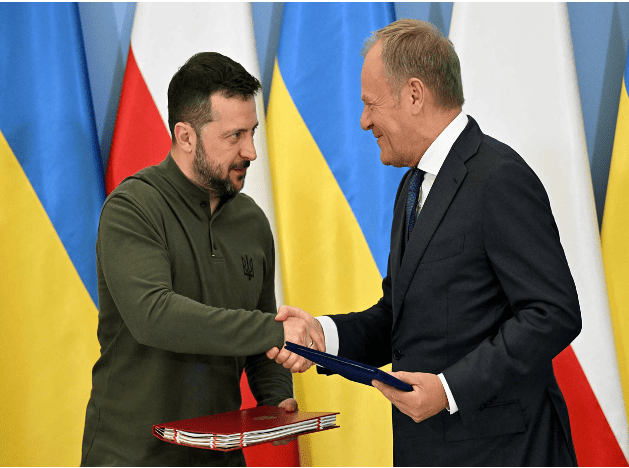

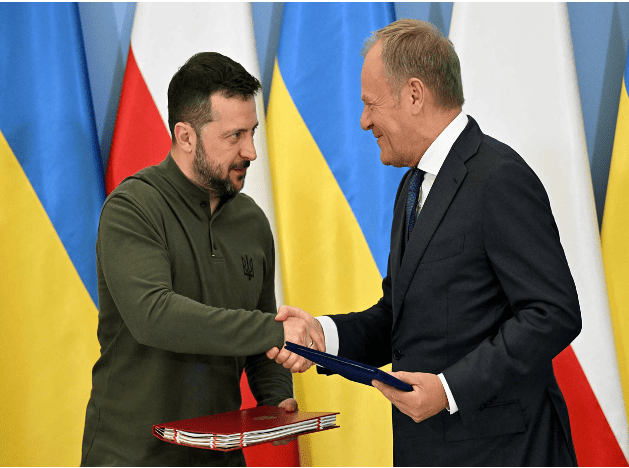

Poland and Ukraine sign bilateral security agreement

On July 8, Ukrainian President Zelensky, who was visiting Poland, and Polish Prime Minister Tusk signed a bilateral security agreement in Warsaw, the capital of Poland. The agreement clearly states that Poland will provide support to Ukraine in air defense, energy security and reconstruction. After signing the agreement, Tusk said that the agreement includes actual bilateral commitments, not "empty promises." Previously, the United States, Britain, France, Germany and other countries as well as the European Union signed similar agreements with Ukraine.

WTO: Members introduce more trade promotion measures than restrictions

The latest trade monitoring report from the World Trade Organization shows that between mid-October 2023 and mid-May 2024, WTO members continued to introduce more trade promotion measures than trade restrictive measures. The WTO said this was an important signal of members' commitment to keep trade flowing amid the current geopolitical uncertainty. According to WTO statistics, during the monitoring period WTO members adopted 169 trade promotion measures on goods, compared with 99 trade restrictive measures. Most of the measures are aimed at imports. Commenting on the findings, WTO Director-General Ngozi Okonjo-Iweala said that despite the challenging geopolitical environment, the latest trade monitoring report highlights the resilience of world trade. Even against the backdrop of rising protectionist pressures and signs of economic fragmentation, governments around the world are taking meaningful steps to liberalize and boost trade. This demonstrates the benefits of trade for people's purchasing power, business competitiveness and price stability. The WTO monitoring also identified significant new developments in economic support measures. Subsidies as part of industrial policy are increasing rapidly, especially in areas related to climate change and national security.

China will reach climate goal while West falls short

There has been constant low-level sniping in the West against China's record on climate change, in particular its expansion of coal mining and its target of 2060 rather than 2050 for carbon neutrality. I have viewed this with mild if irritated amusement, because when it comes to results, China, we can be sure, will deliver and most Western countries will fall short, probably well short. It is now becoming clear, however, that we will not have to wait much longer to judge their relative performances. The answer is already near at hand. We now know that in 2023 renewable energy reached about 50 percent of China's total installed power generation capacity. China is on track to shatter its target of installing 1,200 GW of solar and wind energy capacity by 2030, reaching it five years ahead of schedule. And international experts are forecasting that China's target of reaching peak CO2 emissions by 2030 will probably be achieved ahead of schedule, perhaps even by a matter of years. Hitherto, China has advisedly spoken with a quiet voice about its climate targets, sensitive to the fact that it has become by far the world's largest CO2 emitter and aware that its own targets constituted a huge challenge. Now, however, it looks as if China's voice on global warming will carry an authority that no other nation will be able to match. There is another angle to this. China is by far the biggest producer of green tech, notably EVs, and of renewable energy equipment, namely solar photovoltaics and wind turbines. Increasingly China will be able to export these at steadily falling prices to the rest of the world. The process has already begun. It leaves the West with what it already sees as a tricky problem. How can it become dependent on China for the supply of these crucial elements of a carbon-free economy when it is seeking to de-risk (EU) or decouple (US) its supply chains from China? Climate change poses the greatest risk to humanity of all the issues we face today.
There are growing fears that the 1.5-degree Celsius target for global warming will not be met. 2023 was the hottest year ever recorded. Few people are now unaware of the grave threat global warming poses to humanity. This requires the whole world to make common cause and accept this as our overarching priority. Alas, the EU is already talking about introducing tariffs to make Chinese EVs more expensive. And it is making the same kind of noises about Chinese solar panels. The problem is this. Whether Europe likes it or not, it needs a plentiful supply of Chinese EVs and solar panels if it is to reduce its carbon emissions at the speed that the climate crisis requires. According to the International Energy Agency, China "deployed as much solar capacity last year as the entire world did in 2022 and is expected to add nearly four times more than the EU and five times more than the US from 2023-28." The IEA adds, "two-thirds of global wind manufacturing expansion planned for 2025 will occur in China, primarily for its domestic market." In other words, willy-nilly, the West desperately needs China's green tech products. Knee-jerk protectionism demeans Europe; it is a petty and narrow-minded response to the greatest crisis humanity has ever faced. Instead of seeking to resist or obstruct Chinese green imports, Europe should cooperate with China and eagerly embrace its products. As a recent Financial Times editorial stated: "Beijing's green advances should be seen as positive for China, and for the world." The climate crisis is now in the process of transforming the global political debate. Hitherto it seemed relatively disconnected. That period is coming to an end. China's dramatic breakthrough in new green technologies is offering hope not just to China, but to the whole world, because China will increasingly be able to supply both the developed and developing world with the green technology needed to meet their global targets.
Or, to put it another way, it looks very much as if China's economic and technological prowess will play a crucial role in the global fight against climate change. We should not be under any illusion about the kind of challenge humanity faces. We are now required to change the source of energy that powers our societies and economies. This is not new. It has happened before. But previously it was always a consequence of scientific and technological discoveries. Never before has humanity been required to make a conscious decision that, to ensure its own survival, it must adopt new sources of energy. Such an unprecedented challenge will fundamentally transform our economies, societies, cultures, technologies, and the way we live our lives. It will also change the nature of geopolitics. The latter will operate according to a different paradigm, different choices, and different priorities. The process may have barely started, but it is beginning with a vengeance. Can the world rise to the challenge, or will it prioritize petty bickering over the vision needed to save humanity? On the front line, mundane as it might sound, are EVs, wind power, and solar photovoltaics.

The author is a visiting professor at the Institute of Modern International Relations at Tsinghua University and a senior fellow at the China Institute, Fudan University. Follow him on X @martjacques.

Australia pledges to provide more funds to Pacific island banks to counter China's influence

Australia pledged on Tuesday to increase investment in Pacific island nations, offering A$6.3 million ($4.3 million) to support their financial systems. Some Western banks are cutting ties with the region because of risk factors, while China is trying to increase its influence there. Some Western banks have terminated long-standing banking relationships with small Pacific nations, while others are considering closing operations and restricting access to dollar-denominated bank accounts in those countries. "We know that the Pacific is the fastest-moving region in the world for correspondent banking services," Australian Treasurer Jim Chalmers said in a speech at the Pacific Banking Forum in Brisbane. "What's at stake here is the Pacific's ability to engage with the world," he said, with much of the region at risk of being cut off from the global financial system. Chalmers said the A$6.3 million would help the Pacific develop secure digital identity infrastructure and strengthen compliance with anti-money laundering and counter-terrorist financing requirements. Experts say Western banks are de-risking to meet financial regulations, making it harder for them to do business in Pacific island nations, where compliance standards sometimes lag, and that this undermines those nations' financial resilience. Australia's ANZ Bank is in talks with governments about how to make its Pacific island businesses more profitable amid concerns about rising Chinese influence as Western financial services leave the region, Chief Executive Shayne Elliott said Tuesday. ANZ is the largest bank in the Pacific region, with operations in nine countries, though some of those businesses are not financially sustainable, Elliott said in an interview on the sidelines of the forum. "If we were there purely for commercial purposes, we would have closed it a long time ago," he said.
Western countries, which have traditionally dominated the Pacific, are increasingly concerned about China's plans to expand its influence in the region after it signed several major defense, trade and financial agreements with countries there. Bank of China signed an agreement with Nauru this year to explore opportunities in the country, after Australia's Bendigo Bank said it would withdraw. Mr. Chalmers said Australia was working with Nauru to ensure that banking services in the country could continue. ANZ Bank exited its retail business in Papua New Guinea in recent years, while Westpac considered selling its operations in Fiji and Papua New Guinea but decided to keep them. The Pacific lost about 80% of its correspondent banking relationships for dollar-denominated services between 2011 and 2022, Australian Assistant Treasurer Stephen Jones told the forum, which was co-hosted by Australia and the United States. "We would be very concerned if there were countries acting in the region whose primary objective was to advance their own national interests rather than the interests of Pacific island countries," Mr. Jones said on the first day of the forum in Brisbane. He made the comment when asked about Chinese banks filling a vacuum in the Pacific. Meanwhile, Washington is stepping up efforts to support Pacific island countries in limiting Chinese influence. "We recognize the economic and strategic importance of the Pacific region, and we are committed to deepening engagement and cooperation with our allies and partners to enhance financial connectivity, investment and integration," said Brian Nelson, U.S. Treasury Under Secretary for Terrorism and Financial Intelligence. The United States is aware of the problem of Western banks de-risking in the Pacific region and is committed to addressing it, Nelson told the forum's participants.
He said data showed that the number of correspondent banking relationships in the Pacific region has declined at twice the global average rate over the past decade, and the World Bank and the Asian Development Bank are developing plans to improve correspondent banking relationships. U.S. Treasury Secretary Janet Yellen said in a video address to the forum on Monday (July 8) that the United States is focused on supporting economic resilience in the Pacific region, including by strengthening access to correspondent banks. She said that when President Biden and Australian Prime Minister Anthony Albanese met at the White House last year, they particularly emphasized the importance of increasing economic connectivity, development and opportunities in the Pacific region, and a key to achieving that goal is to ensure that people and businesses in the region have access to the global financial system.

When Amazon also started upgrading "refund only"

An Amazon official said that shipping from the Chinese warehouse will cost less than the traditional FBA (Fulfillment by Amazon) fee and will be similar to domestic small-parcel air delivery services, which should greatly reduce sellers' logistics costs. In addition to logistics, Amazon is also responsible for promotion and traffic. Sellers can still run their own product advertising, pricing and promotional activities to keep their brands distinctive and independent. Many industry insiders said that Amazon launched the "low-price store" to compete with China's cross-border e-commerce platforms such as Temu, Shein and AliExpress. The store gives Chinese e-commerce sellers another platform for going overseas, and many sellers said that because the cost of joining Amazon's cross-border e-commerce had fallen, they had asked about the conditions for joining. After reading through the rules, however, they found them not so friendly to sellers.