In a groundbreaking discovery, researchers from the Xinjiang Institute of Ecology and Geography of the Chinese Academy of Sciences have found a desert moss species, known as Syntrichia caninervis, that has the potential to survive in the extreme conditions on Mars.

The Global Times learned from the institute that during the third Xinjiang scientific expedition, the research team focused on studying the desert moss and found that it not only challenges people's understanding of the tolerance of organisms in extreme environments, but also demonstrates the ability to survive and regenerate under simulated Martian conditions.

Supported by the Xinjiang scientific expedition project, researchers Li Xiaoshuang, Zhang Daoyuan and Zhang Yuanming from the Xinjiang Institute of Ecology and Geography, together with Kuang Tingyun, an academician of the Chinese Academy of Sciences, studied the "pioneer species" Syntrichia caninervis in an extreme desert environment, according to an article the institute sent to the Global Times on Sunday.

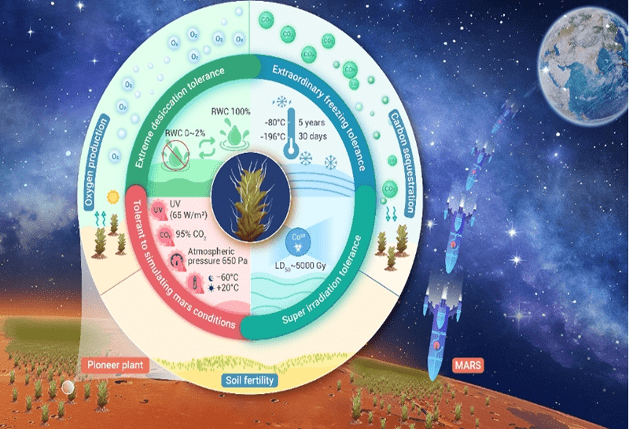

Through scientific experiments, the researchers systematically demonstrated that the moss can tolerate the loss of more than 98 percent of its cellular water, survive temperatures as low as -196°C, and withstand more than 5,000 Gy of gamma radiation, after which it quickly recovers, turns green and resumes growth, showcasing extraordinary resilience.

These findings push the boundaries of human knowledge on the tolerance of organisms in extreme environments.

Furthermore, the research revealed that Syntrichia caninervis can survive simulated Martian conditions combining multiple stresses and can regenerate once returned to suitable conditions. This marks the first report of higher plants surviving under simulated Martian conditions.

The research team also identified unique characteristics of Syntrichia caninervis. Its overlapping leaves reduce water evaporation, while the white tips of the leaves reflect intense sunlight. Additionally, the innovative "top-down" water absorption mode of the white tips efficiently collects and transports water from the atmosphere. Moreover, the moss can enter a selective metabolic dormancy state in adverse environments and rapidly provide the energy needed for recovery when its surrounding environment improves.

Based on the extreme environmental tolerance of Syntrichia caninervis, the research team plans to conduct experiments on spacecraft to monitor how the species survives and adapts under microgravity and various forms of ionizing radiation. They aim to unravel the physiological and molecular basis of the moss's tolerance and explore the key mechanisms that regulate it, laying the foundation for future applications of Syntrichia caninervis in outer space colonization.